Combining two images; this is especially useful if the overlay image has an alpha channel. First the header file:

    //
    //  UIImage+Category.h
    //  ImageOverlay
    //
    //  Created by Georg Tremmel on 29/04/2010.
    //

    #import <UIKit/UIKit.h>

    @interface UIImage (combine)

    // Returns a new image with overlayImage drawn on top of the receiver.
    - (UIImage*)overlayWith:(UIImage*)overlayImage;

    @end

And the implementation file.

    //
    //  UIImage+Category.m
    //  ImageOverlay
    //
    //  Created by Georg Tremmel on 29/04/2010.
    //

    #import "UIImage+Category.h"

    @implementation UIImage (combine)

    - (UIImage*)overlayWith:(UIImage*)overlayImage {
        // The size is taken from the background image (the receiver).
        UIGraphicsBeginImageContext(self.size);

        // Draw the background first, then the overlay on top of it.
        [self drawAtPoint:CGPointZero];
        [overlayImage drawAtPoint:CGPointZero];

        /*
        // If image artifacts appear, replace the "overlayImage drawAtPoint:" call with the following.
        // Yes, it's a workaround; yes, I filed a bug report.
        // The alpha value has to stay below 1.0 (see the update below).
        CGRect imageRect = CGRectMake(0, 0, self.size.width, self.size.height);
        [overlayImage drawInRect:imageRect blendMode:kCGBlendModeOverlay alpha:0.9999999];
        */

        UIImage *combinedImage = UIGraphicsGetImageFromCurrentImageContext();
        UIGraphicsEndImageContext();

        return combinedImage;
    }

    @end
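Calling the category could look roughly like the sketch below. The helper name and the two asset names are placeholders of mine, not part of the original project. Note that the result takes its size from the receiver, and the overlay is drawn unscaled at the origin.

```objc
#import <UIKit/UIKit.h>
#import "UIImage+Category.h"

// Hypothetical helper; "background.png" and "overlay.png" are placeholder
// asset names, not files from the original project.
static UIImage *CombinedExampleImage(void) {
    UIImage *background = [UIImage imageNamed:@"background.png"];
    UIImage *overlay = [UIImage imageNamed:@"overlay.png"];

    // The receiver is the bottom layer and determines the output size.
    // If the background fails to load, messaging nil simply returns nil.
    return [background overlayWith:overlay];
}
```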

An update to 334 Combining Images with UIImage & CGContext – (Offscreen drawing)

(Did I say how much I love categories?)

Update

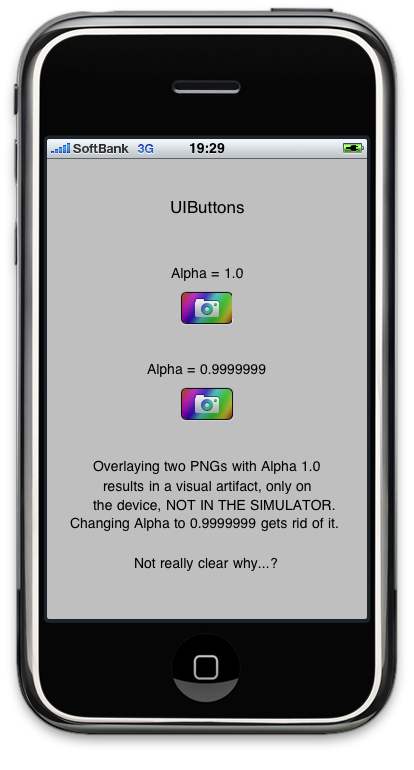

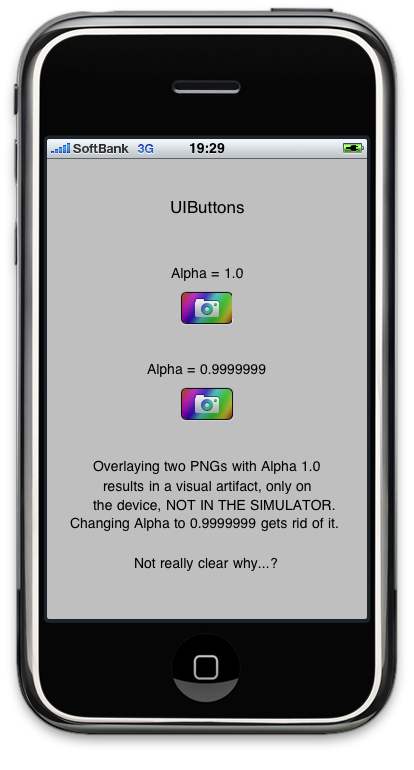

I came across some strange behaviour when layering a PNG image with transparency over another image. It did not show up in the Simulator, only on an iPhone 3GS (and probably also on other devices).

The base image draws fine, but the overlay image appears to be truncated and the last pixels shifted, producing some bright green artifacts.

Changing

    [overlayImage drawAtPoint:CGPointZero];

to

    CGRect imageRect = CGRectMake(0, 0, self.size.width, self.size.height);
    [overlayImage drawInRect:imageRect blendMode:kCGBlendModeOverlay alpha:1.0];

did not really help; the green artifacts remained. Strangely, though, they did not appear with the other blend modes. Using

    CGContextDrawImage(c, imageRect, [overlayImage CGImage]);

would also work, but then the images turn up upside down. Not what I really needed. (Yes, I know, the fix for that might not be hard, but really, it shouldn't be that complicated.)
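For reference, the upside-down result comes from the coordinate systems: a UIKit image context has its origin at the top left, while CGContextDrawImage assumes a bottom-left origin. The usual remedy is to flip the context before drawing. The following is only a sketch under that assumption; the function name is hypothetical, it ignores the overlay's imageOrientation, and I have not checked whether this route avoids the green artifacts on the device.

```objc
#import <UIKit/UIKit.h>

// Hypothetical helper: draws `overlay` over `base` with CGContextDrawImage,
// flipping the coordinate system first so the overlay is not upside down.
static UIImage *OverlayUsingCoreGraphics(UIImage *base, UIImage *overlay) {
    UIGraphicsBeginImageContext(base.size);
    [base drawAtPoint:CGPointZero];

    CGContextRef c = UIGraphicsGetCurrentContext();
    CGRect imageRect = CGRectMake(0, 0, base.size.width, base.size.height);

    CGContextSaveGState(c);
    // Move the origin to the bottom edge and invert the y axis,
    // which matches the orientation CGContextDrawImage expects.
    CGContextTranslateCTM(c, 0, base.size.height);
    CGContextScaleCTM(c, 1.0, -1.0);
    // As with drawInRect:, the overlay is stretched to the base image's size.
    CGContextDrawImage(c, imageRect, [overlay CGImage]);
    CGContextRestoreGState(c);

    UIImage *combined = UIGraphicsGetImageFromCurrentImageContext();
    UIGraphicsEndImageContext();
    return combined;
}
```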

After playing a bit more with the values, I found that setting alpha lower than 1.0 gets rid of the display artifact:

    [overlayImage drawInRect:imageRect blendMode:kCGBlendModeOverlay alpha:0.9999999];
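A note on picking the value, assuming CGFloat is a 32-bit float on the target (the case on 32-bit devices such as the iPhone 3GS; on 64-bit builds it is a double): a literal with too many nines rounds to exactly 1.0 when narrowed to float, which would leave you back at alpha 1.0. The helper below is a hypothetical check of mine, not part of the original files.

```objc
#import <Foundation/Foundation.h>

// Hypothetical helper: returns YES if `alpha` is still below 1.0
// after conversion to single precision (CGFloat on 32-bit iOS).
static BOOL AlphaStaysBelowOne(double alpha) {
    float narrowed = (float)alpha;
    return narrowed < 1.0f;
}

int main(void) {
    @autoreleasepool {
        // Seven nines survive the conversion to float and stay below 1.0.
        NSLog(@"%d", AlphaStaysBelowOne(0.9999999));
        // Nine nines round to exactly 1.0f in single precision.
        NSLog(@"%d", AlphaStaysBelowOne(0.999999999));
    }
    return 0;
}
```

Whether the artifact actually returns in the rounded case was not tested here, but since alpha:1.0 did not help above, it seems safer to stay at seven nines or fewer.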

Bug filed with Apple's Bug Reporter; let's see. Or maybe I am missing something here?

Anyway, here are the files, zipped and ready for download.

Post Scriptum

A test project showing the visual artifact in action. The artifact only appears on the device, NOT IN THE SIMULATOR.